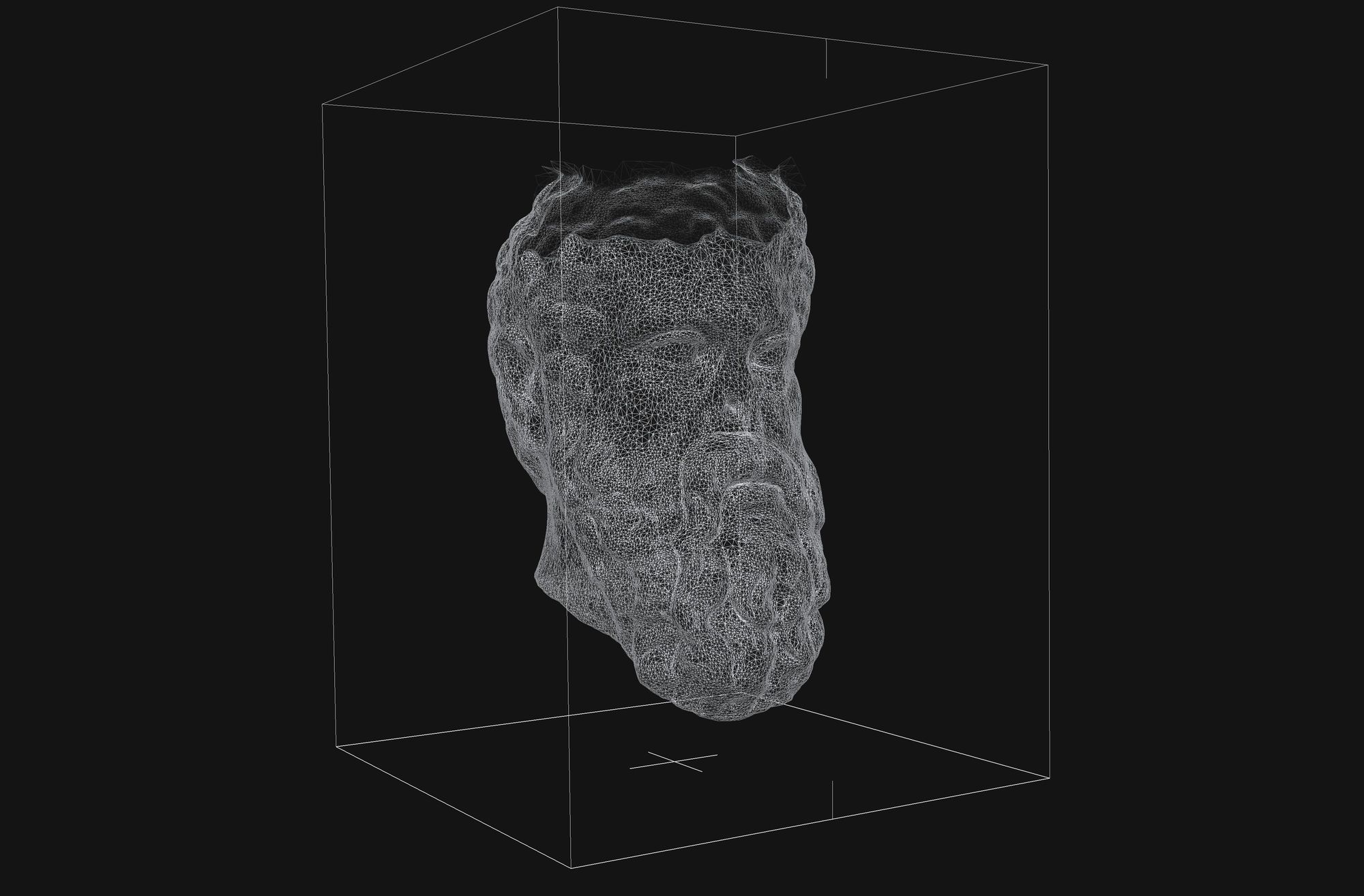

Ultimately, our Code Art Studio (UnbanksyTV DAO) is researching a novel way of applying ML to accelerate the 3D design process. This approach takes the first steps towards a future where designers work together with ML algorithms, focusing on the creative aspects and iterating rapidly on designs to achieve results on an unprecedented scale.

We will build a custom generative algorithm that creates 3D characters by merging various OBJ files. I’m interested in creating 3333 3D characters, but making them one by one would take forever. That is why I’m looking into ways to generate them as OBJ files, which can then be used for anatomically aware facial animation.
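To make the idea of merging OBJ files concrete, here is a minimal sketch in Python. It is not our production pipeline: the helper names (`parse_obj`, `merge_meshes`, `to_obj`) are hypothetical, and it assumes each part uses only `v` and `f` records with positive, 1-based indices. Normals, UVs, materials, and relative (negative) indices are ignored.

```python
from typing import List, Sequence, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, ...]  # 1-based vertex indices, as in the OBJ format
Mesh = Tuple[List[Vertex], List[Face]]


def parse_obj(text: str) -> Mesh:
    """Read only `v` and `f` records from OBJ text (hypothetical helper)."""
    vertices: List[Vertex] = []
    faces: List[Face] = []
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] == "v":
            vertices.append((float(parts[1]), float(parts[2]), float(parts[3])))
        elif parts[0] == "f":
            # A face entry may look like "3", "3/1", or "3/1/2"; keep the vertex index.
            faces.append(tuple(int(p.split("/")[0]) for p in parts[1:]))
    return vertices, faces


def merge_meshes(meshes: Sequence[Mesh]) -> Mesh:
    """Concatenate meshes, shifting face indices by the vertices already added."""
    merged_vertices: List[Vertex] = []
    merged_faces: List[Face] = []
    for vertices, faces in meshes:
        offset = len(merged_vertices)
        merged_vertices.extend(vertices)
        merged_faces.extend(tuple(i + offset for i in face) for face in faces)
    return merged_vertices, merged_faces


def to_obj(vertices: Sequence[Vertex], faces: Sequence[Face]) -> str:
    """Serialize a mesh back to OBJ text."""
    lines = [f"v {x} {y} {z}" for x, y, z in vertices]
    lines += ["f " + " ".join(str(i) for i in face) for face in faces]
    return "\n".join(lines) + "\n"


# Two toy parts, each a single triangle, standing in for a head and a hat.
head = "v 0 0 0\nv 1 0 0\nv 0 1 0\nf 1 2 3\n"
hat = "v 0 0 1\nv 1 0 1\nv 0 1 1\nf 1 2 3\n"
merged_vertices, merged_faces = merge_meshes([parse_obj(head), parse_obj(hat)])
print(to_obj(merged_vertices, merged_faces))
```

The key step is the index offset: OBJ faces refer to vertices by position in the file, so every part added after the first has to have its face indices shifted by the number of vertices that came before it.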

"Recent advances in Generative Adversarial Networks (GANs) have shown impressive results for the task of facial expression synthesis. The most successful architecture is StarGAN, that conditions GANs generation process with images of a specific domain, namely a set of images of persons sharing the same expression.

While effective, this approach can only generate a discrete number of expressions, determined by the content of the dataset. To address this limitation, in this paper, we introduce a novel GAN conditioning scheme based on Action Units (AU) annotations, which describes in a continuous manifold the anatomical facial movements defining a human expression.

Our approach allows controlling the magnitude of activation of each AU and combines several of them. Additionally, we propose a fully unsupervised strategy to train the model, that only requires images annotated with their activated AUs, and exploit attention mechanisms that make our network robust to changing backgrounds and lighting conditions. The extensive evaluation shows that our approach goes beyond competing conditional generators both in the capability to synthesize a much wider range of expressions ruled by anatomically feasible muscle movements, as in the capacity of dealing with images in the wild." source

If you are interested in the subject, take a look. It’s quite the rabbit hole. For PyTorch users looking to try some 3D deep learning themselves, the Kaolin library is worth looking into. For TensorFlow users, there is also TensorFlow Graphics. A particularly hot subfield is the generation of 3D models. Creatively combining 3D models, rapidly producing 3D models from images, and creating synthetic data for other machine learning applications and simulations are just a handful of the myriad use cases for 3D model generation.

In the 3D deep learning research field, however, choosing an appropriate representation for your data is half the battle. In computer vision, the structure of data is very straightforward: images are made up of dense pixels arranged neatly and evenly into a precise grid. The world of 3D data has no such consistency. This lack of consistency motivated the researchers at DeepMind to create PolyGen, a neural generative model for meshes that jointly estimates both the faces and vertices of a model to directly produce meshes. An implementation is available on the DeepMind GitHub.

HOW DO I BUY A VISITOR?

3333 Visitors will be available for purchase. Each costs 0.33 sOHM, not including gas fees. Please make sure that you have a cryptocurrency wallet, such as MetaMask, installed in your browser and that you have enough sOHM for the number of Visitors you'd like to purchase, plus ETH for gas fees. Gas fees are charged by Ethereum miners and can vary based on the number of transactions being processed. After all 3333 Visitors from the launch sell out, you will be able to purchase Visitors on the secondary market on OpenSea.

WHEN CAN I BUY?

Visitors will launch on September 3rd, 2021 at 3:33 PM EST.

HOW WERE THEY CREATED?

Each Visitor is programmatically generated from 333 unique traits, including eyewear, expression, eyes, mouth, nose, neck, hat, clothing, background and skin. While each Visitor is unique, some will be rarer than others. Each Visitor is stored as an ERC-721 token on the Ethereum blockchain and is hosted on IPFS.
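For the curious, here is a rough sketch of how trait-based generation with rarity can work. It is an illustration only, not the actual generator: the trait names, the weights, and the `generate_collection` function are all made up for this example. Each category is sampled with weighted randomness, and duplicate combinations are rejected so that every character is unique.

```python
import random
from typing import Dict, List

# Hypothetical trait table: option -> relative weight (higher = more common).
TRAITS: Dict[str, Dict[str, int]] = {
    "eyewear": {"none": 60, "shades": 30, "monocle": 10},
    "hat": {"none": 50, "beanie": 35, "crown": 15},
    "background": {"grey": 70, "neon": 25, "gold": 5},
}


def generate_collection(
    traits: Dict[str, Dict[str, int]], count: int, seed: int = 0
) -> List[Dict[str, str]]:
    """Draw `count` unique trait combinations using weighted random sampling."""
    # Refuse impossible requests, otherwise the loop below would never finish.
    max_unique = 1
    for options in traits.values():
        max_unique *= len(options)
    if count > max_unique:
        raise ValueError(f"only {max_unique} unique combinations exist")

    rng = random.Random(seed)  # seeded so a run can be reproduced
    seen = set()
    collection: List[Dict[str, str]] = []
    while len(collection) < count:
        combo = tuple(
            rng.choices(list(options), weights=list(options.values()), k=1)[0]
            for options in traits.values()
        )
        if combo in seen:
            continue  # duplicate: draw again
        seen.add(combo)
        collection.append(dict(zip(traits, combo)))
    return collection


characters = generate_collection(TRAITS, count=10)
for character in characters:
    print(character)
```

Options with low weights show up less often, which is what makes some combinations rarer than others. Rejecting duplicates is simple but slows down as the requested count approaches the total number of possible combinations.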

WILL THE LAUNCH BE FAIR?

Yes. All 3333 Visitors will be available at launch. No one will have early access.

HOW DO I CONTACT THE GENESIS SQUAD?

Jump into our Discord.